information gain = entropy ( p a r e n t ) − entropy ( c h i l d r e n ) With this knowledge, we may simply equate the information gain as a reduction in noise. A homogenous dataset will have zero entropy, while a perfectly random dataset will yield a maximum entropy of 1. This essentially represents the impurity, or noisiness, of a given subset of data.

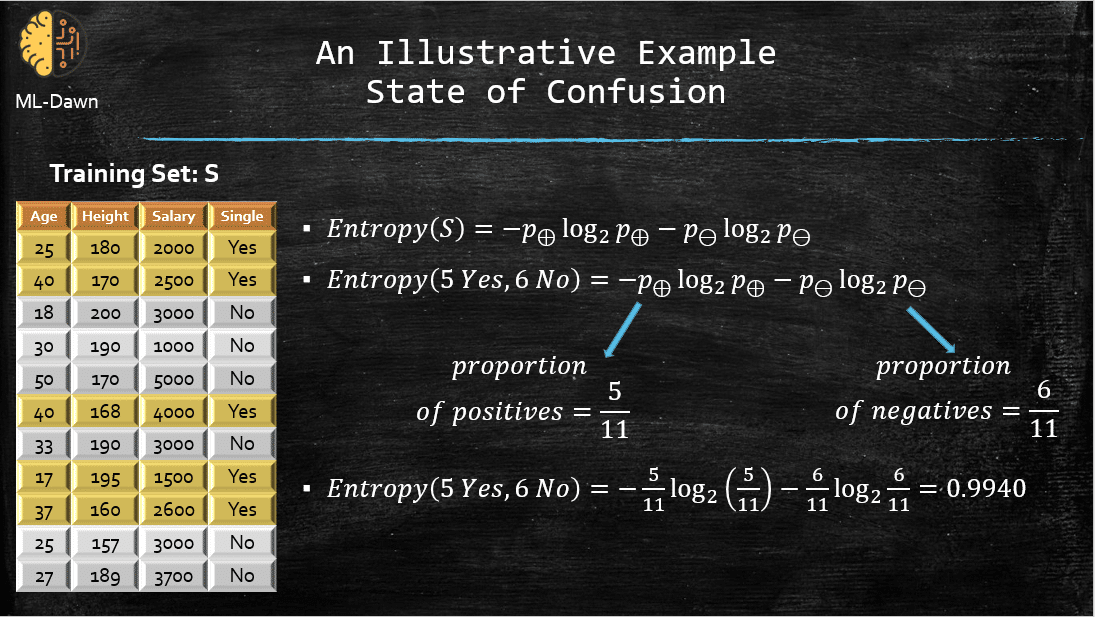

In order to mathematically quantify information gain, we introduce the concept of entropy.Įntropy may be calculated as a summation over all classes where $p_i$ is the fraction of data points within class $i$. However, this would essentially be a useless split and provide zero information gain. Going back to the previous example, we could have performed our first split at $x_1 < 10$. How do we judge the best manner to split the data? Simply, we want to split the data in a manner which provides us the most amount of information - in other words, maximizing information gain. Then, for each subset, we performed additional splitting until we were able to correctly classify every data point. Our initial subset was the entire data set, and we split it according to the rule $x_1 < 3.5$. If you analyze what we're doing from an abstract perspective, we're taking a subset of the data, and deciding the best manner to split the subset further. It'd be much better if we could get a machine to do this for us. So now we have a decision tree for this data set the only problem is that I created the splitting logic. Run through a few scenarios and see if you agree. The goal is to construct a decision boundary such that we can distinguish from the individual classes present.Īny ideas on how we could make a decision tree to classify a new data point as "x" or "o"? Here's what I did. Let's look at a two-dimensional feature set and see how to construct a decision tree from data. This is known as recursive binary splitting.įor those wondering – yes, I'm sipping tea as I write this post late in the evening. Note: decision trees are used by starting at the top and going down, level by level, according to the defined logic. Moreover, you can directly visual your model's learned logic, which means that it's an incredibly popular model for domains where model interpretability is important.ĭecision trees are pretty easy to grasp intuitively, let's look at an example. 
Decision trees are one of the oldest and most widely-used machine learning models, due to the fact that they work well with noisy or missing data, can easily be ensembled to form more robust predictors, and are incredibly fast at runtime.
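To make the two formulas concrete, here is a minimal Python sketch of entropy and information gain. The toy labels are hypothetical, not the data from the example above, and the "useless" split is modeled under the assumption that every point lands on the same side of the threshold, so one child is the whole parent and the other is empty.

```python
import math
from collections import Counter


def entropy(labels):
    """Base-2 Shannon entropy of a list of class labels.

    Uses the equivalent form p * log2(1/p) in place of -p * log2(p).
    An empty list is treated as having zero entropy.
    """
    n = len(labels)
    if n == 0:
        return 0.0
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())


def information_gain(parent, children):
    """Parent entropy minus the size-weighted average entropy of the children."""
    n = len(parent)
    weighted = sum(len(child) / n * entropy(child) for child in children)
    return entropy(parent) - weighted


# Hypothetical toy labels: three "x" points and three "o" points.
parent = ["x", "x", "x", "o", "o", "o"]

print(entropy(parent))                                   # 1.0 (50/50 mix of two classes)
print(information_gain(parent, [["x"] * 3, ["o"] * 3]))  # 1.0 (perfect split)
print(information_gain(parent, [parent, []]))            # 0.0 (split that changes nothing)
```

A split that separates the classes perfectly removes all of the parent's entropy, while a split that leaves the data unchanged gains nothing, which matches the intuition behind the $x_1 < 10$ example.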
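One way to let a machine choose the splitting logic is a greedy version of recursive binary splitting: at each node, try every feature and every midpoint between consecutive distinct values, keep the split with the largest information gain, and recurse until each node is pure. The Python below is a minimal sketch of that idea, not the author's code; the point coordinates and the names `best_split`, `build_tree`, and `predict` are hypothetical, and the data was chosen so that the first split happens to land at $x_1 < 3.5$ (feature index 0 in the code).

```python
import math
from collections import Counter

MIN_GAIN = 1e-12  # ignore gains that are only floating-point noise


def entropy(labels):
    """Base-2 Shannon entropy of a non-empty list of class labels."""
    n = len(labels)
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())


def best_split(points, labels):
    """Return (gain, feature, threshold) for the split with the highest information gain.

    Candidate thresholds are midpoints between consecutive distinct values of each
    feature, so both sides of any candidate split are non-empty.
    """
    n = len(labels)
    parent_h = entropy(labels)
    best = (0.0, None, None)
    for j in range(len(points[0])):
        values = sorted({p[j] for p in points})
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [y for p, y in zip(points, labels) if p[j] < t]
            right = [y for p, y in zip(points, labels) if p[j] >= t]
            gain = parent_h - (len(left) / n * entropy(left) + len(right) / n * entropy(right))
            if gain > best[0] + MIN_GAIN:
                best = (gain, j, t)
    return best


def build_tree(points, labels):
    """Split greedily until each leaf is pure or no split increases information gain."""
    if entropy(labels) == 0.0:
        return labels[0]  # pure node: leaf labeled with its only class
    _, j, t = best_split(points, labels)
    if j is None:
        return Counter(labels).most_common(1)[0][0]  # no useful split: majority-class leaf
    left = [(p, y) for p, y in zip(points, labels) if p[j] < t]
    right = [(p, y) for p, y in zip(points, labels) if p[j] >= t]
    return {
        "feature": j,
        "threshold": t,
        "left": build_tree([p for p, _ in left], [y for _, y in left]),
        "right": build_tree([p for p, _ in right], [y for _, y in right]),
    }


def predict(tree, point):
    """Start at the root and go down level by level until reaching a leaf label."""
    while isinstance(tree, dict):
        tree = tree["left"] if point[tree["feature"]] < tree["threshold"] else tree["right"]
    return tree


# Hypothetical 2-D data set (not the one from the post's figure).
points = [(1.0, 1.0), (2.0, 3.0), (3.0, 2.0), (4.0, 1.0), (5.0, 2.0), (6.0, 5.0)]
labels = ["x", "x", "x", "o", "o", "x"]

tree = build_tree(points, labels)
print(tree["feature"], tree["threshold"])  # 0 3.5  (feature index 0 is x_1)
print(predict(tree, (5.0, 1.0)))           # o
```

Because each split is chosen greedily, this sketch falls back to a majority-class leaf whenever no single split increases information gain, even if a later split would have helped; an XOR-like arrangement of points is the classic case.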
